Part 2. The first post showed what the technique produces. This post covers what it costs to produce, and what those numbers should change about how you fund your OT cybersecurity program.

TL;DR for OT leadership: The analysis described in Part 1 took about one analyst workday and less than two dollars in API cost. That is the full economic picture for an attack plan against the simulated operational industrial process. The cost of entry is not the constraint, and was never going to be. The constraint used to be domain expertise, which AI tools now supply on demand. The remaining constraint is analyst time, and that time keeps dropping as AI tooling improves. For OT leadership, that reality changes three things. First, assume this technique is already being used against configuration files that have left your environment, by people who do not need nation-state resources to execute it. Second, defender investment must be sized against that threat, not against a hypothetical one. An underfunded OT cybersecurity program is not saving money. It is subsidizing the attack. Third, the human validation gap that existed in this experiment, where some AI-identified attack paths did not reproduce on the physical kit, works in the adversary’s favor. An adversary runs everything and keeps what works. A defender cannot afford to let the first attack land.

What Part 1 showed, and what this post adds

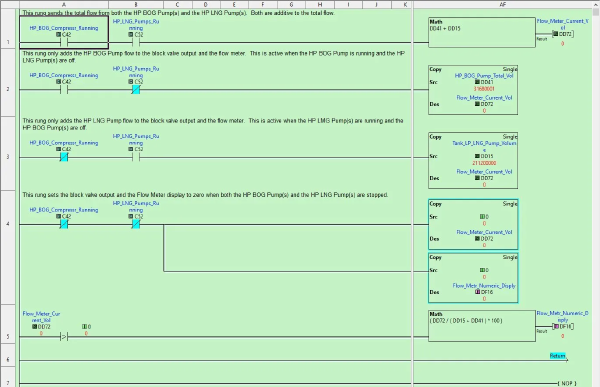

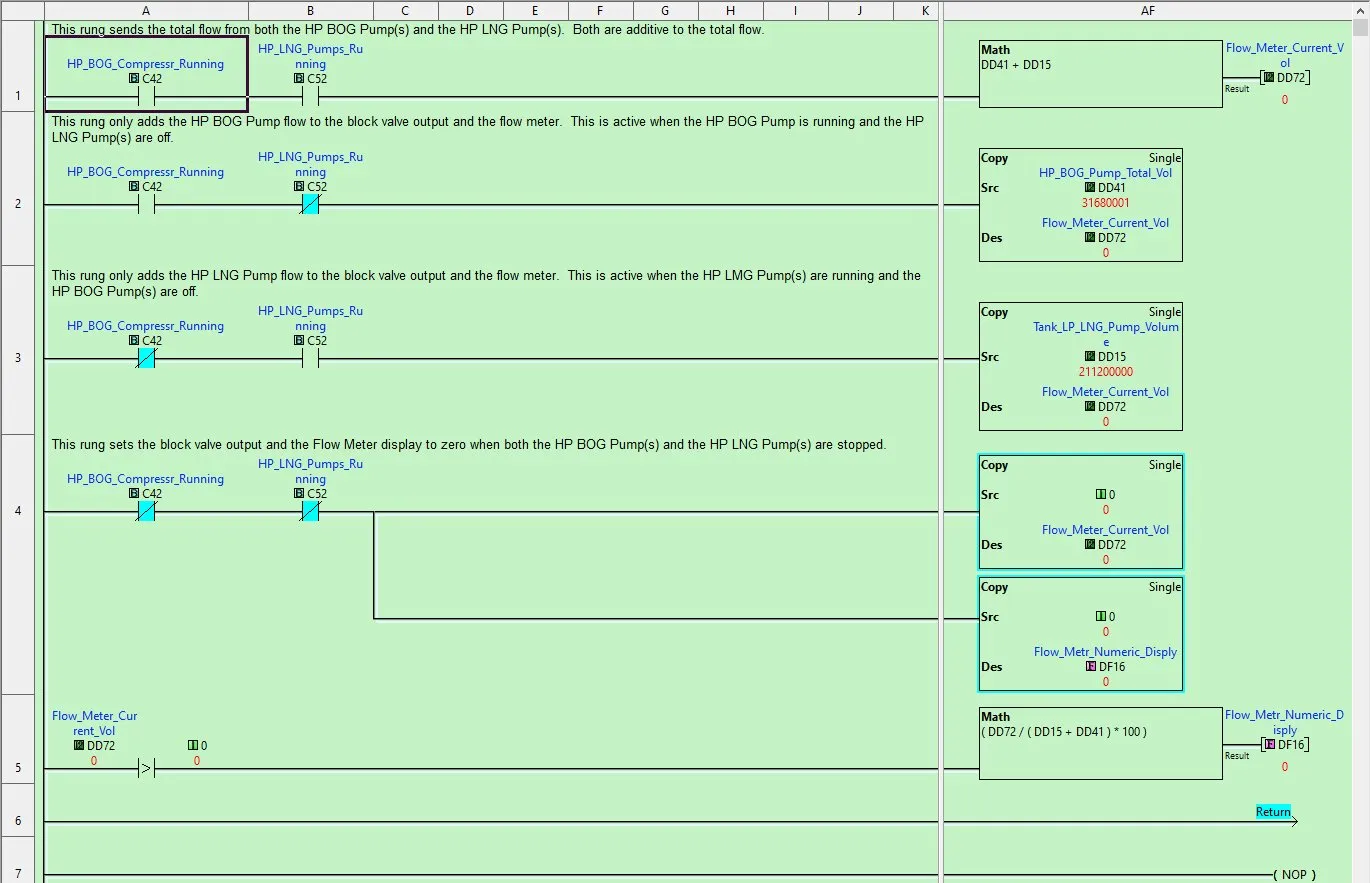

Part 1 covered what a production AI tool produces when you feed it ladder logic screenshots, a Modbus address export, and a few manufacturer reference PDFs pulled from the public internet. The process logic was regenerated and understood. Cross-subroutine dependencies were mapped to reveal the telemetry workflow. Multiple process-level attack paths were identified, with protocol-level instructions for executing them. Two of those attack paths, tested against the physical kit, reproduced cleanly and happened to match exercises we teach in the SANS ICS613 course.

The first post did not cover the economics of the PLC logic analysis: what it cost, in time and money, to conduct the analysis, produce that output, and build operational understanding. Defenders and leadership need those numbers to judge the overall level of risk. The question I anticipate most often from industry experts is: “Sure, but how realistic is it for an attacker to do this?” It is as realistic as the numbers make it, and the numbers are small.

The inputs, again

The full set of materials that produced the Part 1 output:

- Twelve PNG screenshots of ladder logic from the CLICK programming software

- One CSV file with 224 Modbus address rows representing process setpoints and configurations

- Ten PDF reference documents from Automation Direct’s public documentation site, totaling 48 pages

Nothing proprietary beyond what sits on the engineer workstation. Nothing that required network access to the PLC. Nothing that a threat actor could not assemble from a compromised engineer workstation plus a few minutes of public web research.

Where the time went

The analysis took about fifteen to eighteen hours of analyst effort spread across multiple working sessions. That is a full analyst workday and then some, closer to two standard eight-hour days.

The analyst profile matters here. I have deep familiarity with ICS equipment, Modbus and EtherNet/IP protocols, and the simulated LNG terminal operations. I had limited experience reading and writing ladder logic before conducting this analysis: I’m a breaker, not a builder. A significant portion of the analysis time went to learning, on the job, the visual grammar of ladder logic and how the AI tool was describing the process. A controls engineer who already reads ladder fluently would compress the same work to eight to twelve hours.

Out of that fifteen-to-eighteen-hour total, the time broke down roughly like this. Five to six hours went to initial image capture, interpretation, and understanding each rung’s logic. About four hours went to writing up the AI analysis in a structured, reusable format that works as a repeatable AI analysis framework. About three hours went to cross-referencing every mathematical interpretation from images and Modbus addresses against the CSV to verify accuracy. The remaining three to four hours went to understanding what the AI tool produced and testing its assumptions against the ICS613 student kit.
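As a quick sanity check on that breakdown, the sketch below sums the phase estimates and shows each phase's share of the effort. The phase labels are shorthand invented for this example, and "about four hours" and "about three hours" are treated as exact, which is an assumption. With those rounded figures the phases add up to 15 to 17 hours, in line with the rough total quoted above.

```python
# Phase estimates in hours as (low, high), taken from the paragraph above.
# The short phase labels are paraphrases chosen for this sketch.
PHASES = {
    "image capture and rung interpretation": (5, 6),
    "structured write-up": (4, 4),
    "cross-referencing against the CSV": (3, 3),
    "reviewing output and kit testing": (3, 4),
}


def total_range(phases):
    """Sum the low and high ends of every phase estimate."""
    low = sum(lo for lo, _ in phases.values())
    high = sum(hi for _, hi in phases.values())
    return low, high


def midpoint_shares(phases):
    """Return each phase's share of the total, using range midpoints."""
    mids = {name: (lo + hi) / 2 for name, (lo, hi) in phases.items()}
    total = sum(mids.values())
    return {name: mid / total for name, mid in mids.items()}


if __name__ == "__main__":
    low, high = total_range(PHASES)
    print(f"Total: {low} to {high} hours")
    for name, share in midpoint_shares(PHASES).items():
        print(f"{share:5.0%}  {name}")
```

On these midpoints, interpreting the screenshots is the largest single phase at roughly a third of the effort, which is also the phase a fluent ladder reader would shorten the most.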

None of that is unique to my skill set or my kit. An adversary working with a similar artifact trail from a different PLC would spend their time in roughly the same places.

Where the tokens went

The full analysis consumed approximately 212,000 tokens total, split between input and output. At current Claude Sonnet 4.6 rates of three dollars per million input tokens and fifteen dollars per million output tokens, that comes to about a dollar forty in API cost. GPT-4o costs about the same. GPT-4o mini is cheaper by a factor of twenty at roughly six cents, with meaningfully weaker output quality, but enough capability to handle parts of the job under close supervision.
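The arithmetic behind that figure is easy to reproduce. The sketch below uses only the rates and token total quoted above. The post does not state the exact input/output split, so the code first brackets the cost between the all-input and all-output extremes, then backs out the split implied by the rounded dollar-forty figure. That implied split is an inference from rounded numbers, not a measured value.

```python
# Published per-million-token rates quoted in the text (USD).
INPUT_RATE = 3.00    # dollars per million input tokens
OUTPUT_RATE = 15.00  # dollars per million output tokens
TOTAL_TOKENS = 212_000


def api_cost(input_tokens, output_tokens,
             input_rate=INPUT_RATE, output_rate=OUTPUT_RATE):
    """Cost in dollars for a given input/output token split."""
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


def implied_output_tokens(total_tokens, cost,
                          input_rate=INPUT_RATE, output_rate=OUTPUT_RATE):
    """Solve total*in_rate + out*(out_rate - in_rate) = cost*1e6 for out."""
    return (cost * 1_000_000 - total_tokens * input_rate) / (output_rate - input_rate)


if __name__ == "__main__":
    # Bounds: every token billed as input vs. every token billed as output.
    print(f"floor:   ${api_cost(TOTAL_TOKENS, 0):.2f}")
    print(f"ceiling: ${api_cost(0, TOTAL_TOKENS):.2f}")
    # ASSUMPTION: back out the split from the rounded $1.40 figure.
    out = implied_output_tokens(TOTAL_TOKENS, 1.40)
    print(f"implied output tokens: {out:,.0f} of {TOTAL_TOKENS:,}")
```

Whatever the real split was, the bill at these rates falls between about 64 cents and a little over three dollars. The order of magnitude does not depend on the split.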

Smaller-scoped analyses work out much cheaper. A single-subroutine analysis lands around twenty thousand tokens for well under fifteen cents. A three-subroutine cluster with a cross-reference summary lands around forty-five thousand tokens for about thirty cents. A workshop cohort of twenty students running a full single-subroutine exercise comes in around two dollars and twenty cents for the whole class. The classroom example maps cleanly onto threat actor teams coordinating analysis across multiple devices in a target process.
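These smaller figures can be approximated from the full-analysis numbers. The sketch below derives a blended per-token rate from the 212,000-token, dollar-forty run and applies it to the smaller scopes. It assumes the smaller analyses keep the same input/output mix as the full run, which the post does not state, so treat the results as estimates.

```python
# Figures quoted in the text: the full analysis and its approximate API cost.
FULL_TOKENS = 212_000
FULL_COST = 1.40  # USD

# ASSUMPTION: smaller analyses keep the same input/output mix as the full
# run, so one blended dollars-per-token rate applies to all of them.
BLENDED_RATE = FULL_COST / FULL_TOKENS


def estimate_cost(tokens, rate=BLENDED_RATE):
    """Estimated API cost in dollars for an analysis of `tokens` tokens."""
    return tokens * rate


def per_student_cost(class_cost, students):
    """Split a quoted whole-class cost evenly across the cohort."""
    return class_cost / students


if __name__ == "__main__":
    print(f"blended rate: ${BLENDED_RATE * 1_000_000:.2f} per million tokens")
    print(f"single subroutine (20k tokens): ${estimate_cost(20_000):.2f}")
    print(f"three-subroutine cluster (45k): ${estimate_cost(45_000):.2f}")
    print(f"per student (class of 20):      ${per_student_cost(2.20, 20):.2f}")
```

Under that assumption the estimates land close to the quoted figures: roughly thirteen cents for one subroutine, about thirty cents for the cluster, and eleven cents per student.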

This is the number that changes the threat conversation. The cost of entry is not the constraint, and it was not going to be. Even if the model were ten times more expensive, the analysis would still run under twenty dollars. The economic barrier to this technique does not exist.

Where the human still mattered

Part 1 mentioned that only two of the AI-identified attack paths reproduced cleanly on the physical kit. That is the human validation gap, and it is worth being explicit about what it means.

The AI tool produced attack paths based on static analysis of the logic it was given. Static analysis does not capture timing behavior. It does not predict how comparison-driven coils respond to specific write sequences. It does not anticipate how physical analog inputs override PLC register writes during normal scan cycles. Some of what the AI predicted was technically correct as logic, but the hardware simply did not behave the way the logic suggested.

I caught those mismatches by running each proposed attack against the physical board. An adversary without a physical target to validate against will ship more attack paths than they should, including ones that fail.

But here is the part defenders need to sit with. The adversary does not need a high batting average. One attack path that reproduces cleanly in production is enough to cause operational impact. The AI tool gave me two attacks that worked and several that did not. In a defender context, that is a failure rate. In an adversary context, it is two successful attacks and some noise. The asymmetry favors the attacker.

The human is still in the loop, still matters, and still catches things the AI tool misses. That does not mean the AI threat is less real. It means AI plus a human produces even better results, and there will always be humans willing to iterate against live targets to confirm that an attack produces the intended outcome.

What this means for adversaries

The numbers in one place:

- About one analyst workday of time

- About a dollar forty in AI tooling cost

- Publicly available manufacturer reference documents

- Configuration files pulled from a compromised workstation

Output: a ranked list of attack paths against an operational industrial process, with protocol-level execution instructions, mapped to cross-subroutine effects, produced without any industrial domain expertise on the attacker’s part.

The skill profile required to execute this is not nation-state. It is not even that of an advanced persistent threat. It is a moderately capable security analyst with a laptop. The reason this technique has not been seen in the open at scale yet is not that it is hard. It is that the artifact trail has to get out of the OT environment first, and most of the industry still treats that artifact trail as operational paperwork rather than process intelligence.

That window is closing.

What this means for defenders

Part 1 covered the defender actions in detail. The numbers in this post should change how you prioritize them.

Cost arguments against OT cybersecurity investment do not hold up anymore. If the adversary cost of this technique is under two dollars, the defender cost of reasonable mitigation cannot honestly be argued as too expensive relative to the threat. Leadership that declines to fund configuration file protection, remote access monitoring, or penetration testing on cost grounds is making a risk decision, not a cost decision. Those are different conversations and should be framed accordingly.

Detection and response matter more than perimeter prevention at this point. The artifact trail has already left the building in most environments. The question is no longer how to prevent the exfiltration that already happened. The question is how to detect the remote access and protocol-level manipulation that uses what was exfiltrated. That is what the SANS 5 Critical Controls are structured to address, and it is what ICS613 teaches students to assess.
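To make "detect protocol-level manipulation" concrete, here is a minimal defender-side sketch. It assumes you can hand it the source IP and raw TCP payload of requests headed to the PLC's Modbus/TCP port, for example from a span port or a monitoring sensor. It parses the standard 7-byte MBAP header, reads the function code, and flags write requests from hosts that are not on an allowlist. The allowlist, the IP addresses, and the function names are hypothetical, chosen for illustration. This is not how any particular monitoring product works.

```python
import struct

# Standard Modbus function codes that change device state.
WRITE_FUNCTIONS = {
    0x05: "Write Single Coil",
    0x06: "Write Single Register",
    0x0F: "Write Multiple Coils",
    0x10: "Write Multiple Registers",
}

# HYPOTHETICAL allowlist: hosts expected to write to the PLC.
ALLOWED_WRITERS = {"192.0.2.10"}


def parse_request(adu: bytes):
    """Parse a Modbus/TCP request; return (function_code, address) or None."""
    if len(adu) < 10:  # 7-byte MBAP header + function code + 2-byte address
        return None
    _tid, protocol_id, _length, _unit = struct.unpack(">HHHB", adu[:7])
    if protocol_id != 0:  # Modbus/TCP always uses protocol identifier 0
        return None
    function_code = adu[7]
    (address,) = struct.unpack(">H", adu[8:10])
    return function_code, address


def check_write(src_ip: str, adu: bytes):
    """Return an alert string for a write from an unexpected host, else None."""
    parsed = parse_request(adu)
    if parsed is None:
        return None
    function_code, address = parsed
    if function_code in WRITE_FUNCTIONS and src_ip not in ALLOWED_WRITERS:
        name = WRITE_FUNCTIONS[function_code]
        return f"ALERT {src_ip}: {name} at protocol address {address}"
    return None


if __name__ == "__main__":
    # Write Single Register request: address 0x0010, value 0x0064.
    frame = bytes.fromhex("000100000006" "01" "06" "0010" "0064")
    print(check_write("192.0.2.10", frame))    # allowlisted host
    print(check_write("198.51.100.7", frame))  # unexpected host
```

An allowlist of expected writers is a deliberately simple design choice. In most processes only a handful of hosts should ever issue Modbus writes, so a write from anywhere else is worth an analyst's attention even before you examine the value being written.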

Assume the gap is already smaller than you think. An adversary reading Part 1 and this post has most of the methodology. The parts I did not publish are the specific attack procedures, and those are not hard to reconstruct for someone motivated enough. If your OT monitoring posture is not prepared to see a protocol-level manipulation attempt right now, that posture needs to move up the priority list.

A note for leadership

The numbers in this post should settle a question you have probably been asked in budget meetings. How realistic is it for attackers to actually do this? At under two dollars per analysis and a workday of analyst time, it is as realistic as any other commodity attacker technique. The question is not whether adversaries will use AI to understand your process. The question is whether your team will be ready to detect and respond when they do.

The white paper

A consolidated white paper covering both posts is available for download. It includes the full methodology, the cross-subroutine analysis findings at a level appropriate for defenders, the workshop sizing for instructors considering similar exercises, and the defender recommendations from both posts in one place. It also documents where the AI analysis diverged from lab reality in more detail than either post covers, because that asymmetry is material for defender planning.

For teams looking to put this material in front of their own people, the ICS613 course covers the full penetration testing methodology that Parts 1 and 2 sit inside. The workshop I am running at the SANS ICS Summit 2026 puts students through a version of exactly this exercise under instructor guidance.

Go forth and do good things, Don C. Weber

Image credit: Ladder logic screenshot from the CLICK PLUS PLC programming software, captured from the SANS ICS613 student kit configuration.