Earlier this week I said AI was closing the knowledge gap for attackers faster than the industry was ready for. This is the technique, demonstrated.

TL;DR for OT leadership: Threat actors no longer need years of industrial experience to plan targeted attacks on your operational process. They need your configuration files (logic exports, address maps, HMI project files, manufacturer reference documents) and access to a production AI tool. The AI tool then supplies the process expertise the attacker lacks: it reads the logic, infers the physics, maps cross-system dependencies, and produces ranked attack paths with protocol-level instructions for executing them. The industrial-process knowledge gap that used to be a natural barrier against precision attacks has collapsed.

For leadership, that changes three priorities. First, configuration files are process intelligence and must be protected like safety documentation. That means backup validation, integrity checks, and an inventory of every copy wherever it lives, including copies held by vendors and contractors and copies on the IT network. Second, remote access to engineering workstations deserves the same scrutiny you apply to your highest-value production systems, because that is where the intelligence lives. Third, the investment an adversary needs to weaponize this technique is trivial: a single analyst workday and a few dollars of API cost. Assume it is in use today, and fund your teams accordingly.

The technique, demonstrated

In my last post I wrote about a workshop I’d be running at the SANS ICS Summit 2026. The plan was to feed ladder logic screenshots and extracted configuration data to an AI tool and see what it figured out. I said the AI explains the process, identifies what to target, and does it with current production models. A few people asked for the receipts. Here they are. And more importantly, here is the shape of the technique an adversary with your configuration files will run against your process.

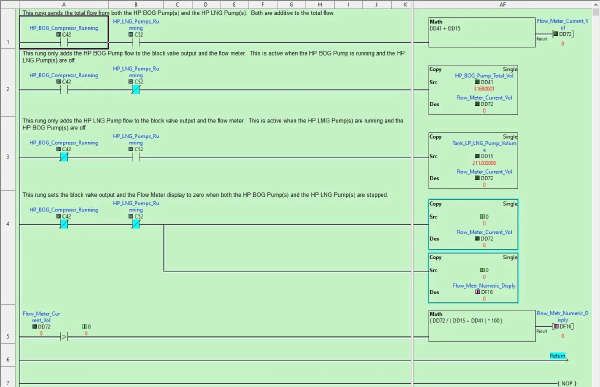

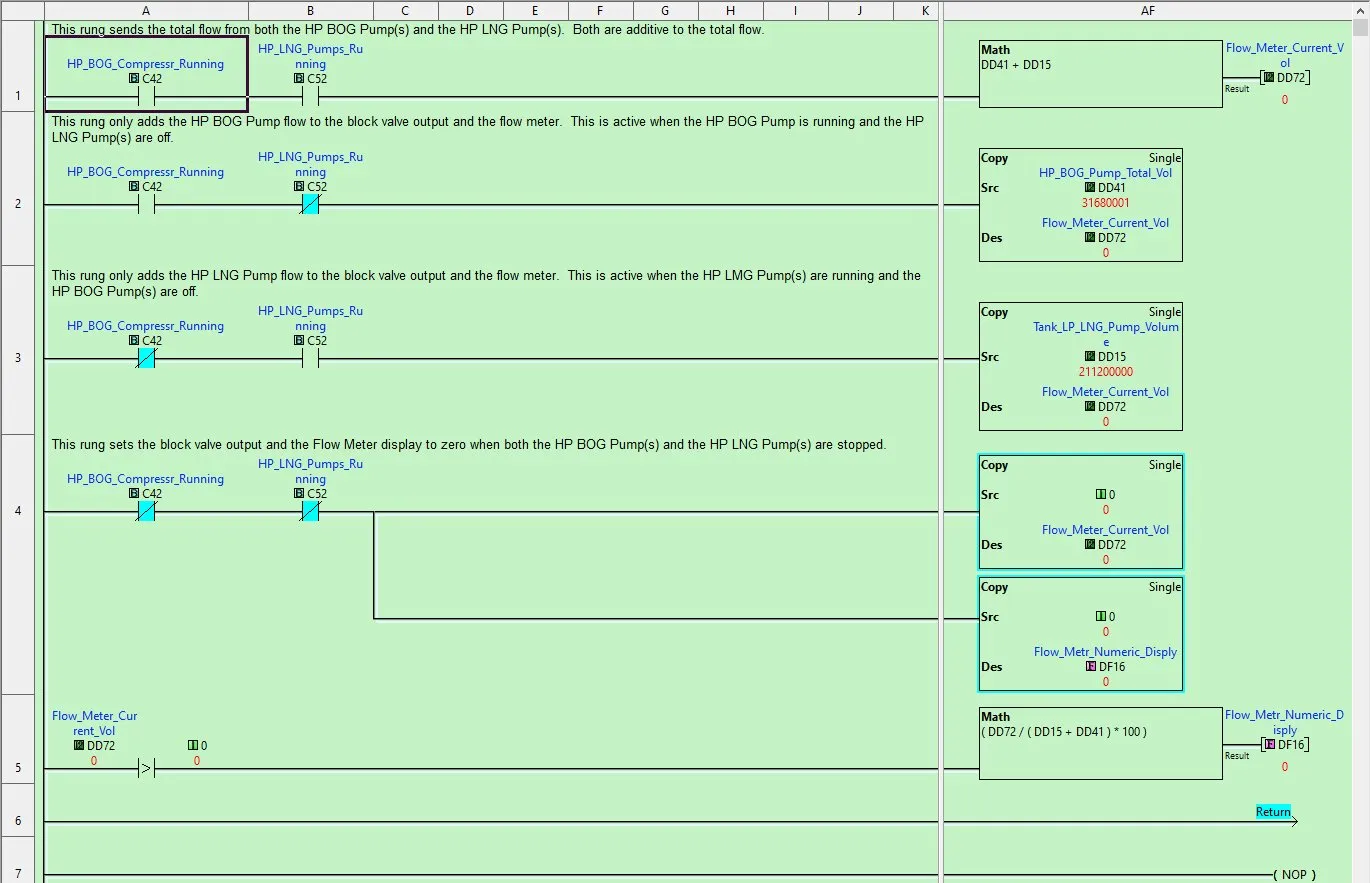

The test bench was the SANS ICS613 student kit. It’s a small physical board that simulates an LNG import terminal. Tank, pumps, a boil-off gas compressor, a flare, a block valve, an HMI, and a set of LEDs and a digital display that show you what the process is doing in real time. A CLICK PLUS PLC runs the whole thing. The ladder logic on it is actual LNG terminal logic, scaled down so students can reach out and touch it.

What I gave the AI was not the PLC. It was the artifact trail:

- Twelve PNG screenshots exported from the CLICK programming software (the main program and every subroutine)

- One CSV file with the Modbus address export, 224 rows

- A handful of CLICK reference PDFs that are already on Automation Direct’s public documentation site

That’s it. No network access to the PLC. No packet captures. No inside knowledge of how the simulation was built. Just the kind of material an attacker walks away with after pulling files off a compromised engineer workstation. That is the scenario laid out in CISA advisory AA26-097A, “Iranian-Affiliated Cyber Actors Exploit Programmable Logic Controllers Across US Critical Infrastructure,” and in the FrostyGoop incident activity that preceded it.

A note about process logic files

The CLICK PLC does not export ladder logic as human-readable configuration files. The CLICK configuration file is a binary blob, which forces analysts to generate images of the ladder logic diagrams. Other controllers and devices can export their logic as XML, which is much easier to analyze than a PNG image of ladder logic.

What the technique produces

The specific findings are material my ICS613 students are expected to work through during the course, so I’m going to stay at the capability level here. The headlines are what matter for this conversation anyway. The AI tool:

Read the physics out of the math. I told the AI the simulator modeled an LNG terminal. What I did not tell it was how the ladder logic worked or what any individual subroutine was doing. One of the subroutines contains an arithmetic expression that derives tank temperature from tank pressure. The AI read the math, connected it to the auto-refrigeration behavior of cryogenic storage, and explained the relationship correctly. It pulled the LNG context I gave it together with the mathematical shape of the formula and produced the right physics interpretation. That is process knowledge lifted straight out of PLC code with the correct domain applied to it.

Traced signals across subroutines. The most useful single output wasn’t any individual subroutine analysis. It was the cross-subroutine map: which coils are written in one subroutine and read in another, which registers cascade effects across multiple logic paths, and where scan order determines whether the program is safe or unsafe. That is exactly the kind of analysis that takes a human controls engineer real time to build from scratch. The AI built it from twelve images.
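For readers who have not built a cross-subroutine map by hand, here is a minimal sketch of the idea. It assumes the rungs have already been transcribed into per-subroutine read and write sets. The subroutine names and addresses are invented for illustration and are not taken from the ICS613 kit.

```python
from collections import defaultdict

# Hypothetical transcription of a ladder program: for each subroutine,
# the addresses its rungs read and the addresses its rungs write.
# Names and addresses are illustrative only.
PROGRAM = {
    "Main":      {"reads": {"C1"},         "writes": {"C10"}},
    "TankLevel": {"reads": {"C10", "DF1"}, "writes": {"DF2", "C20"}},
    "PumpCtrl":  {"reads": {"C20", "DF2"}, "writes": {"C30"}},
}


def cross_subroutine_map(program):
    """Map each address to the (writer, reader) subroutine pairs that share it.

    Only addresses written in one subroutine and read in a different
    subroutine are included.
    """
    writers = defaultdict(set)
    readers = defaultdict(set)
    for name, io in program.items():
        for addr in io["writes"]:
            writers[addr].add(name)
        for addr in io["reads"]:
            readers[addr].add(name)

    links = {}
    for addr, writing_subs in writers.items():
        pairs = sorted(
            (w, r)
            for w in writing_subs
            for r in readers.get(addr, ())
            if w != r
        )
        if pairs:
            links[addr] = pairs
    return links


for address, pairs in sorted(cross_subroutine_map(PROGRAM).items()):
    for writer, reader in pairs:
        print(f"{address}: written in {writer}, read in {reader}")
```

The sketch covers only the read/write cross-reference. Scan-order effects would need the order in which the main program calls each subroutine, which is not modeled here.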

Found dead code that still exposes live Modbus addresses. One of the subroutines in the project is never actually called from the main program. It’s inside the file, but the main program never executes it. The registers it would have written are still in the Modbus address export, with descriptive nicknames that imply live process values. A monitoring tool or HMI built from the export would read those registers and present stale values as current telemetry. The AI identified this by walking the main program’s call chain and noticing the subroutine wasn’t in it.
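Defenders can run the same check against their own projects. The sketch below walks a call graph from the main program, finds subroutines that are never reached, and flags exported addresses that only those unreachable subroutines write. The call graph, write sets, nicknames, and addresses are all hypothetical stand-ins. A real project would need them transcribed from the logic and the address export.

```python
# Hypothetical call graph: which subroutines each subroutine calls.
CALLS = {
    "Main": ["TankLevel", "PumpCtrl"],
    "TankLevel": [],
    "PumpCtrl": ["Alarms"],
    "Alarms": [],
    "LegacyFlow": [],  # present in the project, never called
}

# Hypothetical write sets: addresses each subroutine writes.
WRITES = {
    "Main": {"C10"},
    "TankLevel": {"DF2"},
    "PumpCtrl": {"C30"},
    "Alarms": {"C40"},
    "LegacyFlow": {"DF10", "DF11"},
}

# Hypothetical slice of an address export: address -> nickname.
EXPORTED = {
    "DF2": "Tank_Level",
    "C30": "Pump_Run",
    "DF10": "Flow_Rate",
    "DF11": "Flow_Total",
    "DS1": "Operator_Setpoint",  # written by no subroutine at all
}


def reachable(calls, entry="Main"):
    """Return every subroutine reachable from the entry point."""
    seen = set()
    stack = [entry]
    while stack:
        name = stack.pop()
        if name in seen:
            continue
        seen.add(name)
        stack.extend(calls.get(name, []))
    return seen


def stale_registers(calls, writes, exported, entry="Main"):
    """Exported addresses written only by subroutines that never execute."""
    live = reachable(calls, entry)
    live_written = set().union(*(writes.get(s, set()) for s in live))
    dead_written = set().union(
        *(writes.get(s, set()) for s in calls if s not in live)
    )
    return sorted(
        addr for addr in exported
        if addr in dead_written and addr not in live_written
    )


for addr in stale_registers(CALLS, WRITES, EXPORTED):
    print(f"{addr} ({EXPORTED[addr]}) is exported but only written by dead code")
```

Addresses that no subroutine writes, like the setpoint in this example, are deliberately not flagged, since they may be written by an operator or HMI rather than by the logic.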

Produced attack paths with the Modbus procedures to execute them. Not in abstract terms. Actual write operations, actual values, actual expected behavior, ranked by operational impact.

Two attacks worked, and they were the right two

I ran every attack path it produced against the physical kit. The outcome matters more than I expected.

Two of the attack chains reproduced cleanly on the hardware. The process responded exactly as the AI tool predicted. Physical LEDs changed state. The tank display moved. The flow meter reported what the AI said it would report. The kit was no longer reflecting reality, and an operator watching the board had no way to know.

Those two attack chains are the same two we use in the SANS ICS613 course. They are hands-on exercises we built for students to walk through under instructor guidance as part of the penetration testing curriculum. The AI tool, working from twelve screenshots and a CSV, independently landed on the same attack paths we teach. That cuts both ways. It validates that the course exercises reflect what a capable analyst would identify from the artifact trail, and it validates that the AI’s analysis was technically accurate where accuracy mattered most.

The AI tool identified other attack paths that were not as successful. Some had correct logic but depended on timing or state conditions the AI couldn’t observe statically. Some predicted behavior that testing showed didn’t quite match. That’s the reality of AI analysis. The tool surfaces opportunities that manual analysis might miss. Some of them work, and some fail on technical or physical limitations. To a defender those failures look like false positives, but attackers don’t care. An attacker runs everything. What works, works.

The knowledge gap collapsed, and that is the real story

This is what I was describing in the last post. Give the AI the process context and the artifact trail, and it does the rest. I told it the simulator modeled an LNG terminal. I did not tell it how the ladder logic worked, what each subroutine did, which registers mattered, or how the scan order affected behavior. All of that came out of the images and the CSV. The AI pulled the interpretation together from what it already knows about LNG process behavior, ladder logic grammar, Modbus addressing, and the design patterns that show up in controller code across every industrial vendor. It did not need me to teach it any of that.

What it needed from me was the artifact trail. Ladder logic screenshots. A Modbus address export. A few manufacturer reference PDFs already sitting on the public internet. The kind of materials that live on an engineer workstation, in a vendor’s project folder, on a backup share nobody audits, in an email attachment somebody forwarded to a contractor three years ago and hasn’t thought about since.

For an attacker with cybersecurity skills but no industrial background, that is not a closed door. It’s a key.

What OT defenders need to do

None of what comes next is new. It just matters more now than it did last year.

Treat configuration files as process intelligence, not just operational documents. The ladder logic, the address map, the tag database, the HMI project file. This is your process expressed in text and numbers. Protect it the way you protect your safety documentation. Integrity checks. Version control with audit trails. Offline backups an attacker with domain credentials can’t overwrite. Inventories that list where every copy lives.
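As one way to start the inventory, the sketch below walks a set of directories, hashes every project file it finds, and groups the paths by content hash so identical copies show up together. The `.ckp` extension is the CLICK example used in this post. The function name, the extension set, and the directory paths in the usage comment are assumptions to adapt to your own environment.

```python
import hashlib
from collections import defaultdict
from pathlib import Path

# Extensions to treat as process-logic project files. ".ckp" is the CLICK
# example from this post; extend the set for your own vendors (assumption).
PROJECT_EXTENSIONS = {".ckp"}


def file_digest(path):
    """SHA-256 of a file's contents (reads the whole file into memory)."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


def inventory_copies(roots, extensions=PROJECT_EXTENSIONS):
    """Group every matching file under the given roots by content hash.

    Returns {sha256_hex: [path, ...]}. A hash with more than one path
    means the same project file exists in more than one place.
    """
    copies = defaultdict(list)
    for root in roots:
        for path in sorted(Path(root).rglob("*")):
            if path.is_file() and path.suffix.lower() in extensions:
                copies[file_digest(path)].append(str(path))
    return dict(copies)


# Example usage with hypothetical locations:
# for digest, paths in inventory_copies(["/srv/ews_projects", "/mnt/it_share"]).items():
#     print(digest[:12], len(paths), "copy/copies:", paths)
```

Saving the returned mapping and comparing it against a later run gives a basic integrity check as well: a path whose hash has changed, or a copy that appears somewhere new, is worth a look.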

Keep OT data on the OT side. Configuration files belong on the engineering workstations that need them, not on the shared drive the IT team lifted into Microsoft 365 to make collaboration easier. Every time a process artifact crosses the boundary toward the enterprise, assume it has left your control. This includes the information vendors and integrators move in and out of your operational environments. Attackers don’t have to target you. They know who does your work for you, and they target them.

Monitor remote access like your process depends on it. Because it does. The engineer workstation with the vendor software installed is where process logic lives, where project files live, where the PLC passwords live. That endpoint is a high-value target in your OT environment for an attacker who wants what AI tools can now analyze. Secure remote access is one of the SANS Five ICS Cybersecurity Critical Controls for a reason.

Validate process logic file backups. AI tools make it easy for threat actors to work out which files are most valuable in a process environment. In the simplest case that means files with specific extensions, such as .ckp for CLICK PLCs. AI-developed tools and malware, including ransomware, can now focus specifically on the project files that are critical for operations. Assume they will succeed, and practice rapid recovery.
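A first-pass backup check can be as simple as confirming that every project file on the live side has a byte-identical copy on the backup side. The sketch below does that for `.ckp` files. The function names and directory layout are assumptions, with the backup assumed to mirror the source's folder structure.

```python
import hashlib
from pathlib import Path


def sha256_file(path, chunk_size=65536):
    """SHA-256 of a file, read in chunks so large project files are fine."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_backup(source_dir, backup_dir, extensions=(".ckp",)):
    """Compare project files under source_dir with their backup copies.

    Assumes the backup mirrors the source's relative paths. Returns a
    report with lists of relative paths under "ok", "missing", and
    "mismatched".
    """
    source_dir, backup_dir = Path(source_dir), Path(backup_dir)
    report = {"ok": [], "missing": [], "mismatched": []}
    for src in sorted(source_dir.rglob("*")):
        if not src.is_file() or src.suffix.lower() not in extensions:
            continue
        rel = src.relative_to(source_dir)
        dst = backup_dir / rel
        if not dst.is_file():
            report["missing"].append(rel.as_posix())
        elif sha256_file(src) != sha256_file(dst):
            report["mismatched"].append(rel.as_posix())
        else:
            report["ok"].append(rel.as_posix())
    return report


# Example usage with hypothetical paths:
# report = verify_backup("/srv/ews_projects", "/mnt/offline_backup/ews_projects")
# print(report["missing"], report["mismatched"])
```

A matching hash only shows the backup is identical to the current file. It does not show that the file opens in the vendor software or that the current file is itself trustworthy, so a periodic restore test against known-good versions is still needed.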

Assess what you actually have exposed. This is what ICS613 exists to teach, and it’s why we built the course around this kind of scenario. The workshop I’m running at the SANS ICS Summit 2026 puts students through a version of exactly this exercise.

A note for leadership

Your engineers know which systems hold the configuration files. Your security team knows whether those systems are monitored. The gap between the two is where the risk is sitting, and closing that gap is not a new project. It’s a priority call. Back your teams. Fund the assessment. Get the training. Threat actors are not waiting for us to understand AI tools.

Next post

In Part 2 I’ll cover the actual numbers. How long this analysis took. How many tokens it consumed. What that translates to in API cost at current rates. What the AI got right, where it got things wrong, and why the human kept mattering through all of it. A consolidated white paper version of both posts will be available for download alongside Part 2. Methodology, findings, and defender recommendations structured for reproducibility.

Go forth and do good things, Don C. Weber

Image credit: Ladder logic screenshot from the CLICK PLUS PLC programming software, captured from the SANS ICS613 student kit configuration.